LiteLLM vs LangChain: A Hands-On Comparison for Production AI Teams

Most teams do not begin by carefully comparing LiteLLM vs LangChain. They begin by trying to get something working. One team reaches for LangChain because it makes complex LLM workflows easier to prototype. Another adopts LiteLLM because provider sprawl, inconsistent API access, and routing complexity have already become painful. The choice often feels obvious at first. It becomes less obvious later.

That is because LiteLLM and LangChain solve different problems, but they also create different kinds of operational gravity as AI workloads grow. The LangChain framework helps teams compose chains, agents, retrieval flows, and tool-driven business logic. LiteLLM helps them standardise provider access, route requests, and manage LLM providers through a cleaner interface. Both are useful. Both are widely used. Both can also become harder to live with once experimentation turns into infrastructure.

This comparison is not really about which tool has more features. It is about what each one costs in engineering time, maintenance effort, debugging complexity across multiple LLMs, governance overhead, and long-term flexibility once a proof of concept gives way to production. For teams building serious AI systems, that is the comparison that matters.

LiteLLM vs LangChain: What Each Tool Was Built For

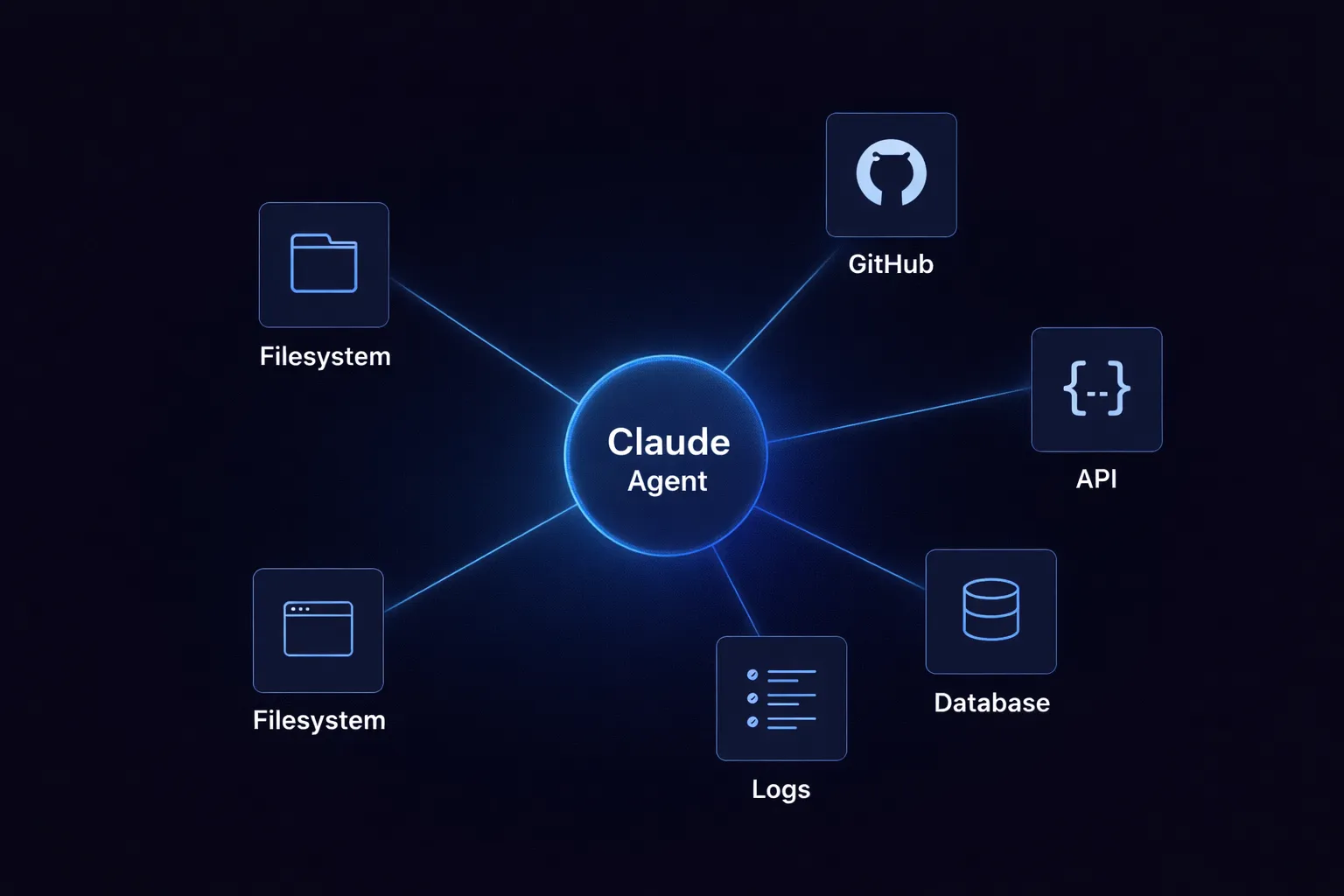

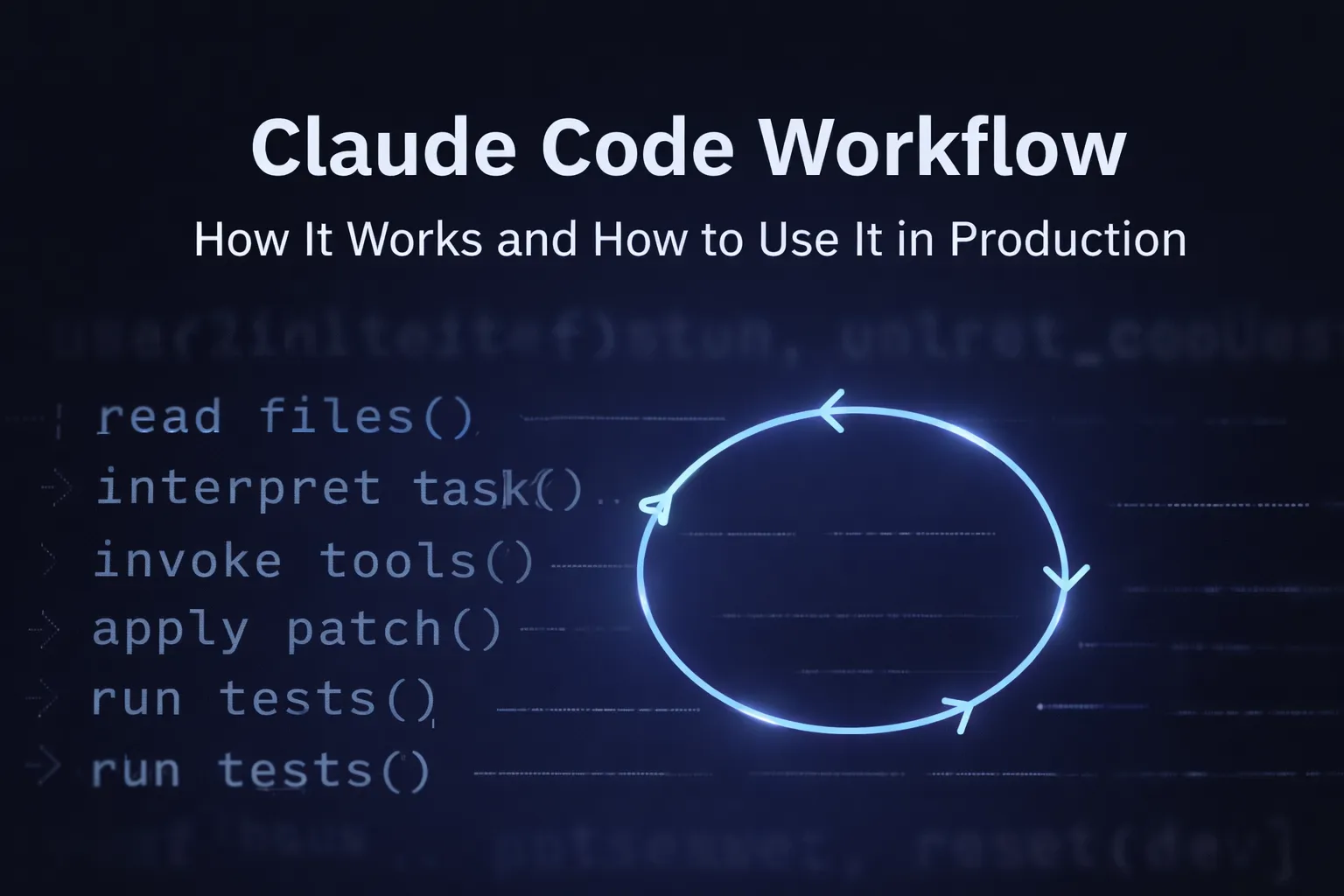

Before comparing LiteLLM and LangChain across production criteria, it helps to understand that they were designed to solve different problems. LangChain was built as an orchestration framework. Its purpose is to help developers compose multi-step AI workflows involving chains, agents, memory, retrieval, and tool use.

LiteLLM was built for a narrower but equally important job: standardising access to many LLM providers through a unified interface and proxy server, so teams can route requests, switch providers, and manage model access without rewriting application code.

Put simply, LangChain focuses on workflow composition, while LiteLLM focuses on model access and routing. That difference is the foundation for every trade-off that follows in production.

Comparing LiteLLM vs LangChain Across What Matters in Production

The difference between LiteLLM and LangChain becomes much clearer once the conversation shifts from features to production realities. The real questions are no longer what each tool can do in isolation, but how each one behaves under operational pressure, how much engineering effort it demands over time, and where hidden complexity begins to surface.

Where LangChain Genuinely Helps and Where It Starts to Hurt

The Case for LangChain in Early Development

LangChain earned its place in the first wave of LLM application development by making ambitious workflow design feel accessible. Teams could move from simple prompt engineering to chaining, retrieval, tool use, and agent-style behaviour without having to build every orchestration layer from scratch. That early speed is real. So is the convenience.

But the same abstractions that make LangChain attractive during prototyping can become harder to manage once reliability, traceability, and performance begin to matter in production.

What Breaks When LangChain Hits Production

- The abstraction layers that help during prototyping can turn into debugging obstacles in production.

- It gets hard to track which prompt was sent, what context was used, and why a chain failed.

- Upgrading versions often breaks things in your existing codebase, which adds to your maintenance workload.

- As performance needs grow, teams often end up rewriting key code from scratch.

- Seeing token costs requires extra tooling. Most teams build their own dashboards and budget systems because LangChain has no built-in budget controls.
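
The traceability problem in the list above comes down to recording, for every step, which prompt went out, what context it used, and how the call ended. A minimal sketch of that idea in plain Python, assuming a hypothetical `Tracer` helper and a stand-in `fake_llm` function (neither is part of LangChain or any other library):

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class StepTrace:
    """One record per model call: what was sent, with what context, and the outcome."""
    trace_id: str
    step: str
    prompt: str
    context: dict
    output: Optional[str] = None
    error: Optional[str] = None
    duration_ms: float = 0.0


@dataclass
class Tracer:
    """Hypothetical in-memory tracer; a real one would ship records to a log store."""
    records: list = field(default_factory=list)

    def traced_step(self, step: str, prompt: str, context: dict,
                    call: Callable[[str], str]) -> str:
        """Run call(prompt), record the result, and re-raise any failure."""
        record = StepTrace(uuid.uuid4().hex, step, prompt, dict(context))
        start = time.perf_counter()
        try:
            record.output = call(prompt)
            return record.output
        except Exception as exc:
            record.error = f"{type(exc).__name__}: {exc}"
            raise
        finally:
            # Runs on success and on failure, so every call leaves a record.
            record.duration_ms = (time.perf_counter() - start) * 1000
            self.records.append(record)


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    if not prompt.strip():
        raise ValueError("empty prompt")
    return f"echo: {prompt}"


tracer = Tracer()
tracer.traced_step("summarise", "Summarise the Q3 report", {"doc_id": "q3"}, fake_llm)
try:
    tracer.traced_step("classify", "   ", {"doc_id": "q3"}, fake_llm)
except ValueError:
    pass  # the failure is still captured in tracer.records
```

Because the wrapper sits around an ordinary callable, the same record is produced whether the step succeeds or fails, which is the information teams typically end up reconstructing by hand when a chain breaks.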

Where LiteLLM Fits Well and Where It Falls Short

LiteLLM is appealing for the same reason many infrastructure tools are appealing: it takes a messy but common problem and makes it operationally cleaner. For teams working across multiple LLM providers, that simplicity is valuable. It reduces friction, lowers switching costs, and creates a more consistent access layer.

The challenge comes later, when that useful abstraction stops being a developer convenience and starts becoming shared infrastructure. At that point, the missing layers around governance, auditability, and control become much harder to ignore.

What LiteLLM Does Well

LiteLLM works well because it solves a narrow but important production problem with unusual clarity. It standardises request formats across providers such as OpenAI, Anthropic, Azure, AWS Bedrock, and self-hosted models, which makes provider switching much less painful.
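
To make "standardising request formats" concrete, here is a small sketch of the underlying idea: one provider-neutral request object translated into provider-specific payloads by adapters. The `ChatRequest` class, the adapter functions, and the model name are hypothetical, and the payload shapes are simplified approximations of two common styles (system prompt inside the message list versus as a top-level field). This is not LiteLLM's internal code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChatRequest:
    """Provider-neutral request shape (hypothetical, for illustration)."""
    model: str
    user_message: str
    system_prompt: Optional[str] = None
    max_tokens: int = 256


def to_openai_style(req: ChatRequest) -> dict:
    """System prompt travels as the first entry in the messages list."""
    messages = []
    if req.system_prompt:
        messages.append({"role": "system", "content": req.system_prompt})
    messages.append({"role": "user", "content": req.user_message})
    return {"model": req.model, "messages": messages, "max_tokens": req.max_tokens}


def to_anthropic_style(req: ChatRequest) -> dict:
    """System prompt travels as a top-level field next to the messages."""
    payload = {
        "model": req.model,
        "messages": [{"role": "user", "content": req.user_message}],
        "max_tokens": req.max_tokens,
    }
    if req.system_prompt:
        payload["system"] = req.system_prompt
    return payload


ADAPTERS = {"openai": to_openai_style, "anthropic": to_anthropic_style}


def build_payload(provider: str, req: ChatRequest) -> dict:
    """Pick the adapter for a provider; unknown providers fail loudly."""
    try:
        return ADAPTERS[provider](req)
    except KeyError:
        raise ValueError(f"no adapter registered for provider {provider!r}") from None


req = ChatRequest(model="example-model", user_message="Hello", system_prompt="Be brief.")
openai_payload = build_payload("openai", req)
anthropic_payload = build_payload("anthropic", req)
```

Application code only ever builds a `ChatRequest`, so switching providers becomes a one-word change rather than a rewrite of every call site.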

It also supports failover and load balancing with relatively little configuration, and its proxy server mode lets teams introduce it into existing infrastructure without reworking the entire application stack. LiteLLM also gives teams visibility into spend by tracking usage by key, user, and team, and it supports budget enforcement and detailed cost controls. Getting started takes a basic Python script and a single pip install, which keeps setup fast and the initial dependency footprint low.
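
Failover and load balancing are easier to reason about with a toy version in hand. The sketch below rotates the starting provider on each request (round-robin) and falls through to the next provider when a call fails. The `Router` class and the two provider functions are hypothetical stand-ins; LiteLLM's own router is configured differently and handles far more cases.

```python
import itertools
from typing import Callable, List, Tuple

Provider = Tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the rotation has failed."""


class Router:
    """Hypothetical round-robin router with ordered failover."""

    def __init__(self, providers: List[Provider]) -> None:
        if not providers:
            raise ValueError("at least one provider is required")
        self._providers = list(providers)
        self._next_start = itertools.cycle(range(len(self._providers)))

    def complete(self, prompt: str) -> Tuple[str, str]:
        """Return (provider_name, response) from the first provider that succeeds."""
        start = next(self._next_start)
        # Rotate the list so each request begins with a different provider.
        ordered = self._providers[start:] + self._providers[:start]
        errors = []
        for name, call in ordered:
            try:
                return name, call(prompt)
            except Exception as exc:  # a real router would only retry retryable errors
                errors.append(f"{name}: {exc}")
        raise AllProvidersFailed("; ".join(errors))


def flaky_primary(prompt: str) -> str:
    """Stand-in for a provider that is currently timing out."""
    raise TimeoutError("primary timed out")


def healthy_backup(prompt: str) -> str:
    """Stand-in for a provider that is responding normally."""
    return f"backup answered: {prompt}"


router = Router([("primary", flaky_primary), ("backup", healthy_backup)])
first = router.complete("ping")
second = router.complete("ping")
```

Both calls end up served by `backup`: the first after `primary` fails, the second because the rotation starts with `backup`. Production routers add cooldowns, retry limits, and shared state on top of this basic loop.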

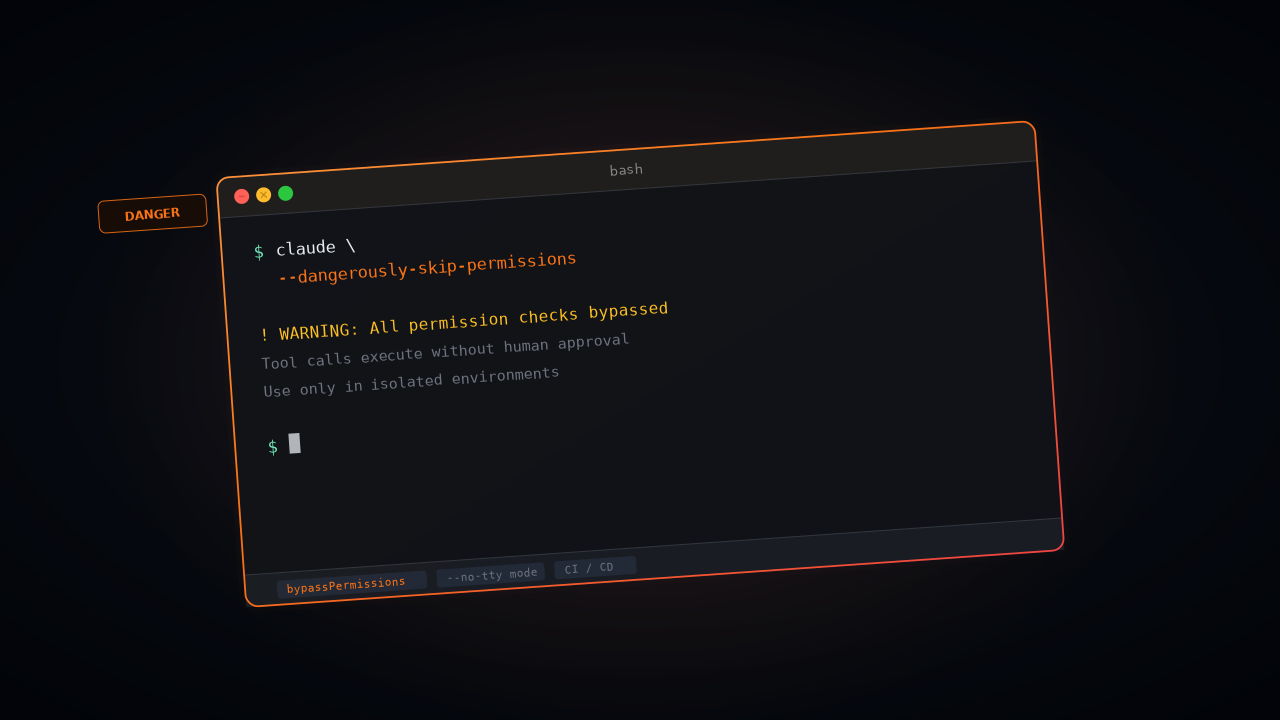

The Operational Ceiling Teams Hit

LiteLLM remains useful longer than most teams expect, but as it becomes shared infrastructure, operational complexity increases. Teams have to handle Redis state, routing rules, logging, failover, and other tricky edge cases as they turn a simple LiteLLM proxy into a full platform.

- Enterprise authentication, SSO, and audit logging are not built in by default.

- There is no native support for model hosting or serving; LiteLLM only routes requests to endpoints that are hosted elsewhere, whether by a provider or on infrastructure you run yourself.

- As teams need more governance, they end up building additional custom tools on top of LiteLLM.

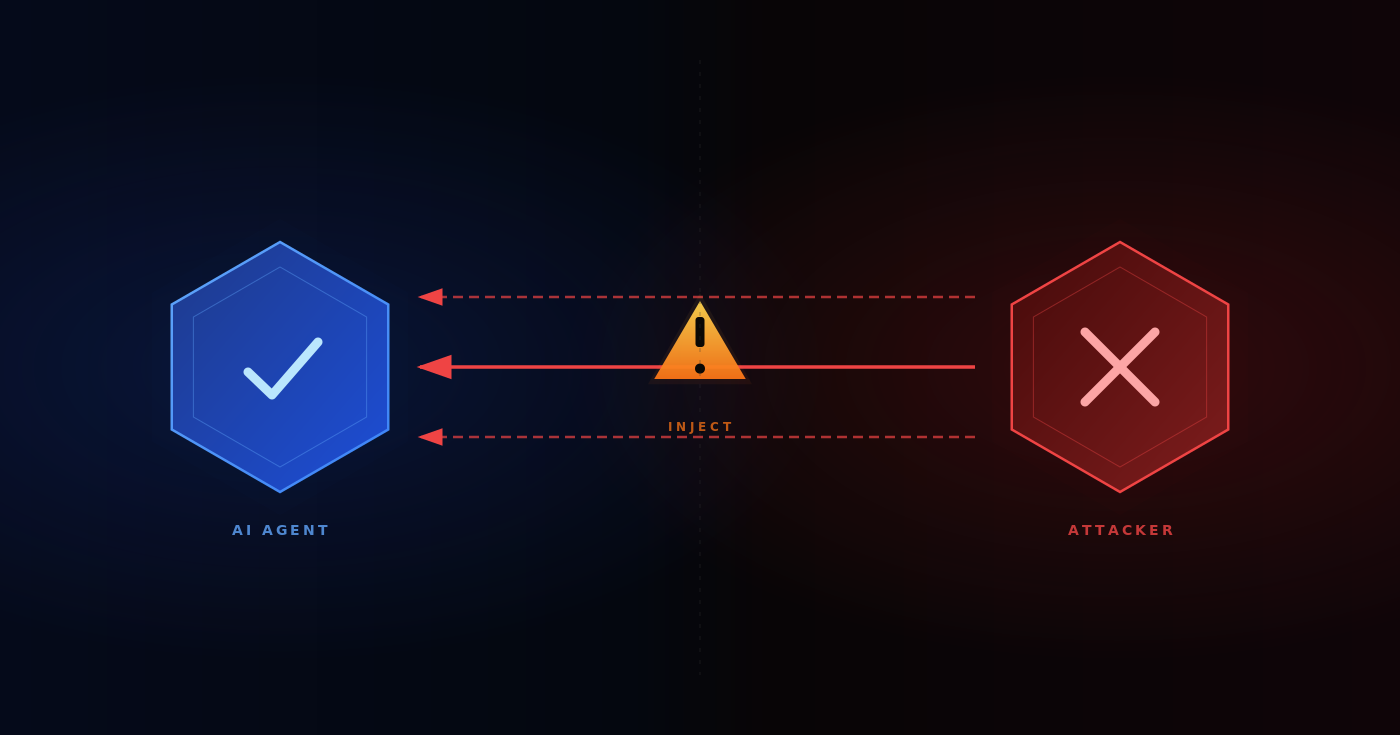

The Real Production Decision: Routing Layer, Orchestration Framework, or Both

Most teams avoid this question until they are already committed. In practice, the real issue is not simply whether LiteLLM or LangChain is better. It is whether routing and orchestration should remain separate concerns, whether combining both increases operational burden, and when a stitched-together stack becomes harder to manage than a unified platform.

For some teams, using LangChain and LiteLLM together makes sense because each tool handles a different layer of the problem. But that combination also creates a broader operational surface area, with separate upgrade cycles, debugging paths, and community dependencies. This is why many production teams eventually keep a routing layer while replacing framework-heavy orchestration with lighter custom logic that is easier to reason about and maintain.
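
As an illustration of what "lighter custom logic" can look like, the sketch below writes a small retrieval flow as ordinary functions and takes the model call as an injected `complete` callable, which is where a routing layer would plug in. The document store, the keyword retrieval, and `stub_complete` are all hypothetical placeholders rather than anything taken from LangChain or LiteLLM.

```python
from typing import Callable, Dict, List

# Tiny in-memory document store with made-up example content.
DOCS: Dict[str, str] = {
    "refunds": "Refunds are processed within 5 business days.",
    "shipping": "Standard shipping takes 3 to 7 days.",
}


def retrieve(question: str, docs: Dict[str, str]) -> List[str]:
    """Naive keyword retrieval: keep documents whose key appears in the question."""
    lowered = question.lower()
    return [text for key, text in docs.items() if key in lowered]


def build_prompt(question: str, passages: List[str]) -> str:
    """Assemble the prompt explicitly so it can be logged and inspected."""
    context = "\n".join(f"- {p}" for p in passages) or "- (no matching context)"
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


def answer(question: str, complete: Callable[[str], str]) -> str:
    """Orchestration as plain function calls; `complete` is the routing layer."""
    passages = retrieve(question, DOCS)
    prompt = build_prompt(question, passages)
    return complete(prompt)


def stub_complete(prompt: str) -> str:
    """Hypothetical stand-in for a call made through a routing layer."""
    return "stub: " + prompt.splitlines()[-1]


result = answer("How do refunds work?", stub_complete)
```

Every intermediate value here is a normal Python object, so a failing step can be debugged with a breakpoint or a print statement, which is much of the appeal of this approach.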

What Neither Tool Handles Well for Enterprise Teams

The primary gap does not show up during early prototyping. It appears when model access becomes a shared platform concern and teams need to manage costs, policies, and auditability across different teams and business units. Comparing LiteLLM vs LangChain by features alone misses the requirements that emerge when AI assistants and other complex applications operate in regulated or multi-team environments.

- Centralised cost governance: LangChain has no native budget controls, and LiteLLM's budgets are enforced only inside its proxy, which teams still have to deploy and operate themselves as shared infrastructure.

- Audit trails for compliance: Logs exist, but building compliant, exportable audit records requires external pipelines in both cases.

- Model hosting and private deployment: Both tools assume models are hosted elsewhere; self-hosted or VPC-deployed models require additional architecture.

- Role-based access control across teams: Assigning different LLM access to different teams or applications is not a first-class feature in either tool.

- Unified observability: Getting a single view of prompt activity, cost, latency, and errors across providers requires custom dashboards in both architectures.
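
Several of the gaps above (per-team budgets, role-based model access, an audit trail) reduce to a policy check that runs before a request is forwarded. A minimal sketch, assuming hypothetical `TeamPolicy` and `Gateway` classes and made-up team and model names; it does not describe how LiteLLM, LangChain, or TrueFoundry implement these controls:

```python
from dataclasses import dataclass, field
from typing import Dict, Set


class PolicyError(Exception):
    """Raised when a request violates an access or budget policy."""


@dataclass
class TeamPolicy:
    allowed_models: Set[str]
    monthly_budget_usd: float
    spent_usd: float = 0.0


@dataclass
class Gateway:
    """Hypothetical policy layer that sits in front of model calls."""
    policies: Dict[str, TeamPolicy]
    audit_log: list = field(default_factory=list)

    def authorize(self, team: str, model: str, estimated_cost_usd: float) -> None:
        """Check access and budget before forwarding; log every decision."""
        policy = self.policies.get(team)
        if policy is None:
            decision = "deny: unknown team"
        elif model not in policy.allowed_models:
            decision = "deny: model not allowed"
        elif policy.spent_usd + estimated_cost_usd > policy.monthly_budget_usd:
            decision = "deny: budget exceeded"
        else:
            policy.spent_usd += estimated_cost_usd
            decision = "allow"
        # Denied requests are logged too, so the trail shows attempts as well as usage.
        self.audit_log.append({"team": team, "model": model,
                               "cost_usd": estimated_cost_usd, "decision": decision})
        if decision != "allow":
            raise PolicyError(decision)


gateway = Gateway({"support": TeamPolicy({"small-model"}, monthly_budget_usd=1.00)})
gateway.authorize("support", "small-model", 0.60)  # within budget, allowed

denied = []
for team, model, cost in [("support", "large-model", 0.10),
                          ("support", "small-model", 0.60),
                          ("finance", "small-model", 0.10)]:
    try:
        gateway.authorize(team, model, cost)
    except PolicyError as exc:
        denied.append(str(exc))
```

The hard part in practice is not the check itself but enforcing it in one place for every team and keeping the resulting log exportable, which is why this tends to become a platform concern.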

How TrueFoundry Addresses What LiteLLM and LangChain Leave Behind

TrueFoundry addresses the operational gaps that arise when LiteLLM or LangChain is used as shared, multi-team infrastructure. Its features correspond directly to the missing capabilities outlined above.

- Unified gateway: Remove routing complexity with a single API surface that covers public models, including OpenAI, Claude, Llama, and Gemini, as well as private and self-hosted models. No need to maintain separate LiteLLM proxy infrastructure.

- Cost governance: Built-in token-level tracking, per-team budget enforcement, and usage breakdowns without exporting logs to external analytics tools. This is particularly valuable in regulated sectors such as healthcare, where cost accountability may be a compliance requirement.

- Auditability, RBAC, and SSO: Role-based access control, SSO integration, and audit logging are built in, covering the governance gaps that require add-ons or custom pipelines in both LiteLLM and LangChain.

- Private model hosting: Deploy and serve models inside your own AWS, GCP, or Azure environment to keep data within your security perimeter. No external model hosting abstractions required.

- Toolchain consolidation: Routing, governance, cost tracking, and model serving are all handled in a single platform. This reduces operational complexity, limits upgrade overhead, and makes debugging easier than stitching together several separate tools.

Conclusion: Pick the Right Tool for Where You Actually Are

LangChain and LiteLLM both solve real problems, but they solve different kinds of problems, and that distinction matters more as systems mature. LangChain helps teams move quickly when they are designing workflow logic, especially in the early stages of experimentation. LiteLLM helps teams simplify LLM provider access, routing, and spend visibility when model usage begins to spread across AI applications and environments. But production AI rarely stops at orchestration or routing alone.

As usage grows, teams usually need stronger governance, clearer cost controls, tighter access management, and a more reliable operational surface than either tool provides on its own. If you are still prototyping, LangChain can accelerate the path forward. If your immediate need is clean multi-provider routing, LiteLLM is a sensible starting point. But if your team needs routing, governance, cost visibility, and model hosting to work together without becoming a patchwork of tools and custom controls, a managed platform like TrueFoundry becomes the more durable choice.

Frequently Asked Questions

What are the key differences between LiteLLM and LangChain?

LiteLLM and LangChain sit at different layers of the stack. LiteLLM standardises access to many model providers and gives teams a cleaner routing surface, while LangChain helps compose multi-step application logic such as chains, agents, retrieval flows, and tool use. One solves provider access. The other solves workflow composition.

Does LangChain use LiteLLM?

Not by default. They solve different layers of the stack. LangChain is typically used for orchestration, while LiteLLM serves as provider abstraction and routing. Some teams deliberately combine them: LangChain orchestrates the workflow, and LiteLLM handles provider failover and unified API calls. The trade-off is that each layer introduces its own debugging surface, upgrade path, and operational assumptions.

Is LiteLLM similar to LangChain?

Not really. LiteLLM is focused on making LLM provider integration, routing, cost tracking, and failover simple and uniform. LangChain is focused on making complex, multi-step prompt workflows, chaining, and agent logic easy to prototype. Most production teams that use both eventually find themselves carving out which parts of the stack each tool owns.

At what team size or traffic level should you move beyond LiteLLM for production AI?

LiteLLM stays elegant for small teams or single workloads, but once you need enterprise governance, centralised cost control, access policies, or unified audit logs, you’re in custom tooling territory. The tipping point is usually when LLM access becomes a product surface or a shared platform across teams. At that point, the cost of homegrown governance often exceeds the cost of adopting a managed AI gateway.

Can LangChain and LiteLLM be replaced by a single managed AI platform?

For most production teams, yes. Unified platforms like TrueFoundry are designed to consolidate routing, governance, cost visibility, and model serving into a single place, reducing the need to stitch together multiple tools and custom control layers. The result is fewer upgrade cycles, a single debugging surface, and less maintenance debt at scale.

TrueFoundry positions its AI Gateway as adding roughly 3–4 ms of latency, handling 350+ RPS on 1 vCPU, and scaling horizontally for production use. In the same comparison, it describes LiteLLM as having higher latency, struggling beyond moderate RPS, lacking built-in scaling, and being best suited to light or prototype workloads.
